Lessons from The Bath Schools Mashup

It’s over a year since we put time aside to create our first little open data app and what started as a hacked solution to a personal problem has turned into a popular local resource.

The Bath Schools Mashup has now been used by over 3,000 people.

I’ve been pretty touched by some of the comments from local parents and since we’ve just sweated over another data update, now seems a good time to write down how we tackled the mashup and take stock of the lessons learned for other open data fans.

Background

The best projects are often born of a purely personal need and I believe this is the best place to start on any open data project. It has to matter for it to make sense.

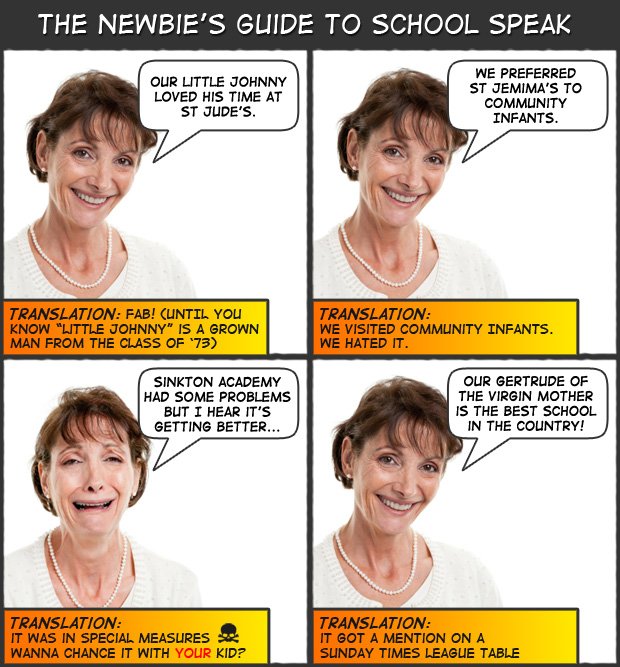

In our case our little boy had to go through the primary admissions process in Bath last year and being fairly new to the area we had no clue about local schools. Like most parents, we started asking other parents about schools and (god bless ’em) they gave us a pretty frustrating set of answers. It turns out talking honestly about education is like swinging: It only happens behind closed doors and is more urban myth than hard fact.

It’s well intentioned, but a bit useless to a local education newbie.

Time for some disclosure: Our household isn’t blessed in the education stakes. There are a few GCSE certificates somewhere in the loft and an A-Level (grade D) in Maths for me. We only achieved middle aged solvency by losing our twenties to self-education while the posh kids were on Gap Yahs and partying, so we’re (unsurprisingly) pretty keen our child doesn’t suffer the same crappy schooling we did. Catching up late in the race is not a pleasant experience. It rubs.

Open Data Step 1: Know thy limits

I love data. I also know it isn’t an end in itself; data rarely provides definitive answers.

In the case of education don’t expect statistics to provide the fullest possible picture of a school. Visiting a school and meeting staff remains a far more powerful experience than numbers could ever offer for what is, after all, a very personal decision. As parents we need to like the place.

I do believe good data fulfills one very important function: Data raises questions.

Visiting a school armed with quality information puts parents in the position of asking quality questions. Why do 6 in 10 kids at school A fail the 3R’s? Why should I bother applying for school B when it’s several times over-subscribed? Why does school C have an attendance record 5 times worse than school D half a mile away?

Asking teachers these questions gives them the chance to shine with great answers the data can’t provide – or mess up royally with a half baked answer that confirms you were right to worry. Either way you come away more informed than you would if you’d just strolled round for 20 minutes, nodded and then asked when lunch is served. (I’ve seen parents do this.)

So for me, the goal for good data is to shine a light on things.

What people make of what they see remains up to them. We’ll leave spin to the politicians and vested interests.

Open Data Step 2: Harvest data

Having given up on anecdotes we turned to official sources, starting with the Department for Education (henceforth DfE). Like most official data sources, it seems to have been designed by a committee the size of the politburo that involves almost everyone – except parents.

The range of data is truly mind blowing. See our nearest school on this brilliant page, with over 80 data points of mind-numbing joy.

The DfE website is a fine example of how clever people think providing loads of information is helpful. It isn’t. It’s confusing.

(Civil servants: Please read Nudge – Barack Obama did and he’s doing okay.)

Sourcing good data is the foundation of any open data project and it took the bulk of our time. Nothing else can happen until you have a quality dataset so if you’re tackling this yourself, set aside all thought of presentation until later. Right now, think data.

The mashup uses data from several sources. No single source was particularly useful in itself but all combined to create a picture of each school for the uninitiated:

DfE Performance Data

Aka exam results. In an effort to baffle old people the education establishment has come up with brilliant names for each part of your child’s schooling called Key Stages. I’m 39 (therefore geriatric in school years) so here’s a super fast translation for stupid people like me:

- KS2 at age 11 – 3R’s in plain English, or SATS if you’re super modern (11+ if you’re really really old). It’s the stuff kids do at the end of primary school.

- KS4 at age 16 – think GCSEs or O-levels.

- KS5 at age 18 – exams for big kids, A-levels, etc.

The DfE is getting better each year at providing the data in a form you can work with. This year’s is available in some nice big spreadsheets and all schools have a unique code (called the Establishment Number) that’s very useful for tying data sets together.
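
For anyone tackling this themselves, tying data sets together on that unique code might look roughly like the sketch below. It assumes each spreadsheet has already been loaded as a list of dictionaries; the `estab` key, the other field names and the sample figures are all made up for illustration and are not the real DfE column headings.

```python
def join_by_establishment(primary_rows, extra_rows, key="estab"):
    """Merge two lists of dicts on a shared school code.

    `key` is a hypothetical column name, not a real DfE header.
    Schools missing from `extra_rows` are left out of the result.
    """
    extra_by_code = {row[key]: row for row in extra_rows}
    joined = []
    for row in primary_rows:
        match = extra_by_code.get(row[key])
        if match is None:
            continue  # no matching record in the second source
        merged = dict(row)
        merged.update({k: v for k, v in match.items() if k != key})
        joined.append(merged)
    return joined


# Illustrative rows only; the numbers are invented.
performance = [
    {"estab": "1001", "name": "School A", "ks2_pass_pct": 82},
    {"estab": "1002", "name": "School B", "ks2_pass_pct": 61},
]
absence = [
    {"estab": "1001", "unauth_absence_pct": 0.4},
    {"estab": "1003", "unauth_absence_pct": 1.9},
]

schools = join_by_establishment(performance, absence)
```

Keeping the codes as strings avoids losing any leading zeros when spreadsheets are exported.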

We downloaded the top line data only since our aim (see step 1) is to sketch a picture rather than replicate the official tables which are already open to eagle eyed data freaks.

Additional DfE Data

This year’s DfE performance spreadsheets are now a goldmine of extra data and were much better than what we used originally. (I was particularly intrigued by the spending figures but that’s for another day). As parents, we were drawn to the Unauthorised Absence figures. If schools have a truancy problem, it’s indicative of deeper issues and I want to know why.

The tables also contained something much simpler: School addresses. We ran these through the Google geocoder API to get a latitude/longitude which is critical in an admissions process where distance to school affects entry criteria.

OFSTED Scores

Parents love OFSTED scores because they’re simple: Schools are graded by government inspectors on a scale of 1 (outstanding) to 4 (inadequate) and these are the only metrics I’ve heard regularly in the playground. The Sunday Times combines these scores with performance data to create their (infamous?) league tables.

Delving deeper we found OFSTED scores less useful.

Many inspections are very old and it turns out schools get plenty of warning before the inspectors arrive. Four-year-old reports on prepped (and often panicked) staff don’t strike me as very robust.

Sadly OFSTED is also a long way behind other government departments in making data easily accessible in useful chunks. There is now a dedicated statistics page but the formatting is poor. We ended up hand keying the top data.

OFSTED does offer one valuable lesson: Simple scoring systems are easy for parents to access.

Local authority admissions data

Admissions data is another interesting way to profile a school because it shows which schools parents are voting for. It’s a kind of crowd sourced confidence measure and exposes what parents really think about schools, away from playground sugar coating.

There’s a more important role for this data on the mashup: It indicates to parents their real chances of getting the school of their choice.

Where schools are heavily over-subscribed, parents need to check the school admissions criteria very carefully to stand a chance of getting a place.
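
As a rough illustration of what "over-subscribed" means in numbers, here is a minimal sketch that divides applications by available places. Which application figure to use (first preferences only, or all preferences) is an assumption here, and the function names are hypothetical rather than anything taken from the mashup.

```python
def subscription_ratio(applications, places):
    """Return applications per available place (1.0 means exactly full)."""
    if places <= 0:
        raise ValueError("places must be a positive number")
    return applications / places


def describe_subscription(ratio):
    """Turn the ratio into the plain wording a parent would recognise."""
    if ratio > 1:
        return "over-subscribed"
    if ratio < 1:
        return "under-subscribed"
    return "fully subscribed"


# Invented example: 90 applications for 30 places.
ratio = subscription_ratio(90, 30)
label = describe_subscription(ratio)
```

A ratio of 3.0 reads as "three applications for every place", which is easier to take in than two raw totals.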

Unfortunately our local authority (BANES) is like many councils and has yet to embrace open data in any form. In Facts are Sacred: The Power of Data, The Guardian rails against the data obfuscation tricks national government used to play to keep information out of prying hands. BANES clearly hasn’t read this book and we could only find the information we needed in PDF form, which is unworkable. We had to hand key the data.

Open Data Step 3: Present the data

Having sourced, normalised and cleaned your data, it’s time to present it. The aim of the mashup wasn’t to be a local league table. Our aim was to give parents a quick skip into the admissions system.

The BANES admissions process is very typical of English schools and is driven primarily by distance to school “as-the-crow-flies”. In theory parents can apply for any school they like anywhere but in reality that would be pretty dumb. The chances of gaining a place will be determined by a school’s popularity and your distance to the school. (The exceptions are voluntary aided religious schools whose admissions criteria are weighted towards church goers. Most have lengthy criteria that ensure the devout from the next village always trump godless kids who live next door to the school.)
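
To make "as-the-crow-flies" concrete, here is a minimal sketch using the haversine formula on latitude/longitude pairs. This is one common way to estimate straight-line distance and is an assumption on my part; an admissions authority may measure differently, so treat the result as a guide only. The coordinates and school names below are made up.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def crow_flies_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres (haversine)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_schools(home, schools, limit=5):
    """Return up to `limit` schools, closest to `home` (lat, lon) first."""
    ranked = sorted(
        schools,
        key=lambda s: crow_flies_km(home[0], home[1], s["lat"], s["lon"]),
    )
    return ranked[:limit]


# Illustrative points only, not real school locations.
home = (51.3810, -2.3590)
example_schools = [
    {"name": "Far School", "lat": 51.4000, "lon": -2.4000},
    {"name": "Near School", "lat": 51.3820, "lon": -2.3600},
]
closest = nearest_schools(home, example_schools, limit=2)
```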

Google Maps was the obvious way to present the data, allowing us to clearly show distance to your nearest schools. We then overlaid ranked tables of the key data next to each school.

The first mashup

With so much data available it wasn’t easy deciding what to show. We’re parents, not professional educators, so we picked stuff that answered the most obvious questions for us. The aim was to provide a quick picture of your closest schools and your chances of getting in, with a skim of the most obvious questions:

- How far is the school?

- What are my chances of getting in? i.e. is it over/under subscribed

- How did inspectors rate the school?

- How many kids could read, write and add-up when they left? (for primaries)

- How many kids had a reasonable clutch of exams when they left? (for secondaries)

- Is there any problem with kids playing hooky?

Distances, admissions data, OFSTED, performance tables and truancy scores respectively provide a baseline answer to those questions. Further data is available at a click to those who want it.

There was one very painful omission: Progress Measures. School data now includes something called “Value Added Scores” which in essence show how far a school has taken a child on their educational journey; i.e. their progress.

Teachers love this score and so did we. It has the potential to become the holy grail of measures, offering a way to counterbalance the binary, finishing line mentality of exams. But value added scoring also has a serious drawback: Nobody outside of the education establishment gets it.

Since the mashup is about simplifying complicated stuff and this metric is seriously hard to present and understand, we dropped it. I’d love to see someone do some work to make this usable: Teachers should hire a PR firm, get a data visualisation specialist, put signs on buses – anything. Value Added is a missing link.

Open Data Step 4: Expect some crap

The mashup has been amazingly popular and the reaction from happy parents has been heart warming. Parents are accessing important information faster.

Are they still using the official sources? Hell yes, but we’ve got them to the good stuff way quicker. At the most basic level the mashup alleviates the need to call the Local Authority just to get an accurate list of your closest schools. It also highlights the scores parents talk most about without them having to wade through 80 data points on the equivalent DfE page.

Success doesn’t mean it was an entirely smooth ride and there’s a lesson here for any open data project: Somebody is going to loathe you.

We knew league tables were controversial and thought this was a subtler approach. It turns out there’s still a vocal few who hate anything like the mashup. I thought objections would be over the choice of metrics but soon realised these were just people who hate releasing this data in any shape or form whatsoever. It turned out they were vested interests.

Whatever your choice of open data, be it educational (and therefore sadly political) or even just local bus timetables – data has a habit of making somebody look bad. You’ll take a hit for it.

The mashup has taught me to filter feedback very carefully. People who bring viable alternative ideas and suggestions to the table get heard. Noisy whiners get ignored.

“It’s easier to ask forgiveness than it is to get permission.” – Grace Hopper

Open data projects should be collaborative but when hard decisions have to be made, remember it’s your project – do what feels right to you.

Open Data Step 5: Iterate

I haven’t really done step 5 because I haven’t found time to push forward. The mashup has been tweaked where data has changed but the structure remains mostly the same.

That’s not to say we haven’t uncovered scope for vast improvement.

The mashup has proven a useful tool to lots of parents but in truth I’m disappointed it hasn’t widened engagement. Parents who already care lots about their choice of school are heavily using the mashup but parents who aren’t so bothered still aren’t using it (or anything?) at all.

The problem with data is the more complex you make it, the fewer people use it.

Education data seems to be stuck in the same hole food labelling has been for years: It’s just hard enough to ensure only the most dedicated people can penetrate it. I’m beginning to suspect successive governments and the teaching unions collude to make data deliberately hard and ensure parents stay away from tough questions.

An alternative approach?

If I had the time to iterate, I’d love the mashup to broaden appeal by being even simpler. No tables or percentages, we need something easier: A traffic light system? How about we repurpose the new food labelling system?

It’s probably a pipe dream; I can only imagine the arguments that would ensue over how to create a fair and simple score that looked like my totally made up label.

I do believe it’s a problem worth solving: The further we simplify, the broader the appeal and the easier it becomes for parents to ask good questions.
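
To show how little code a traffic light system would need, here is a minimal sketch. Every threshold in it is invented for illustration; agreeing fair cut-offs is exactly the argument described above, and nothing here reflects an official or agreed scoring scheme.

```python
def traffic_light(value, green_at, amber_at, higher_is_better=True):
    """Map a single metric to 'green', 'amber' or 'red'.

    `green_at` and `amber_at` are hypothetical cut-offs chosen by the caller.
    For metrics where lower is better (absence, OFSTED grade), pass
    higher_is_better=False and the comparison is flipped.
    """
    if not higher_is_better:
        value, green_at, amber_at = -value, -green_at, -amber_at
    if value >= green_at:
        return "green"
    if value >= amber_at:
        return "amber"
    return "red"


# Made-up figures and made-up thresholds for one imaginary school.
school_label = {
    "results": traffic_light(82, green_at=80, amber_at=65),
    "absence": traffic_light(1.9, green_at=0.5, amber_at=1.5,
                             higher_is_better=False),
    "ofsted": traffic_light(2, green_at=2, amber_at=3,
                            higher_is_better=False),
}
```

The hard part is not the code but choosing cut-offs everyone accepts as fair.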

Lessons

- Set aside lots of time, then some more – These projects often turn out to be icebergs. Actual development time on the mashup was tiny compared to the time sourcing, understanding and (latterly) maintaining the data. This project was done in evenings and weekends; it took two months to build and now takes a couple of weeks a year – every year – to update.

- Never stop learning – Critics will attempt to make you feel stupid; sometimes they’ll be right. Listen, read and learn as much as you can on your chosen topic. This will help you filter ranty noise from the valuable new perspectives you should heed.

- Be confident – I quickly discovered that many of those I first saw as “experts” turned out to be less useful on the detail. An enthusiastic amateur in the trenches is often worth more than professionals in ivory towers. Your passion counts.

- Don’t forget the problem – As you get deeper into your topic, it’s easy to forget how hard it all seemed when you were a newbie. Open data projects usually start as a solution to an everyday problem. Stay focussed on solving that problem.

And finally… help?

The mashup was way harder than it should have been because dealing with public data is still a real pain.

There is lots of well-intentioned tech chat about open data and a few hack days are now centred around it. Unfortunately hacks don’t work well with icebergs: Long on talk, much shorter on real delivery.

I don’t think that will change until sourcing data gets easier. Politicians and civil servants deserve to be badgered but in truth I have no idea where to start on this – my time is sapped.

So if anyone knows a councillor, public official, civil servant, MP or educator who can grease the wheels to ensure public data is made available in a timely, consistent, useable format, I’m all ears.

Data is, after all, ours.